Before we learn anything about ETL Testing its important to learn about Business Intelligence and Dataware. Let’s get started – What is BI? Business Intelligence is the process of collecting raw data or business data and turning it into information that is useful and more meaningful. The raw data is the records of the daily transaction of an organization such as interactions with customers, administration of finance, and management of employee and so on.

These data’s will be used for “Reporting, Analysis, Data mining, Data quality and Interpretation, Predictive Analysis”. What is Data Warehouse? A data warehouse is a database that is designed for query and analysis rather than for transaction processing. The data warehouse is constructed by integrating the data from multiple heterogeneous sources.It enables the company or organization to consolidate data from several sources and separates analysis workload from transaction workload. Data is turned into high quality information to meet all enterprise reporting requirements for all levels of users.

ETL stands for Extract-Transform-Load and it is a process of how data is loaded from the source system to the data warehouse. Data is extracted from an OLTP database, transformed to match the data warehouse schema and loaded into the data warehouse database. Many data warehouses also incorporate data from non-OLTP systems such as text files, legacy systems and spreadsheets. Let see how it works For example, there is a retail store which has different departments like sales, marketing, logistics etc. Each of them is handling the customer information independently, and the way they store that data is quite different. The sales department have stored it by customer’s name, while marketing department by customer id. Now if they want to check the history of the customer and want to know what the different products he/she bought owing to different marketing campaigns; it would be very tedious.

The solution is to use a Datawarehouse to store information from different sources in a uniform structure using ETL. ETL can transform dissimilar data sets into an unified structure.Later use BI tools to derive meaningful insights and reports from this data.

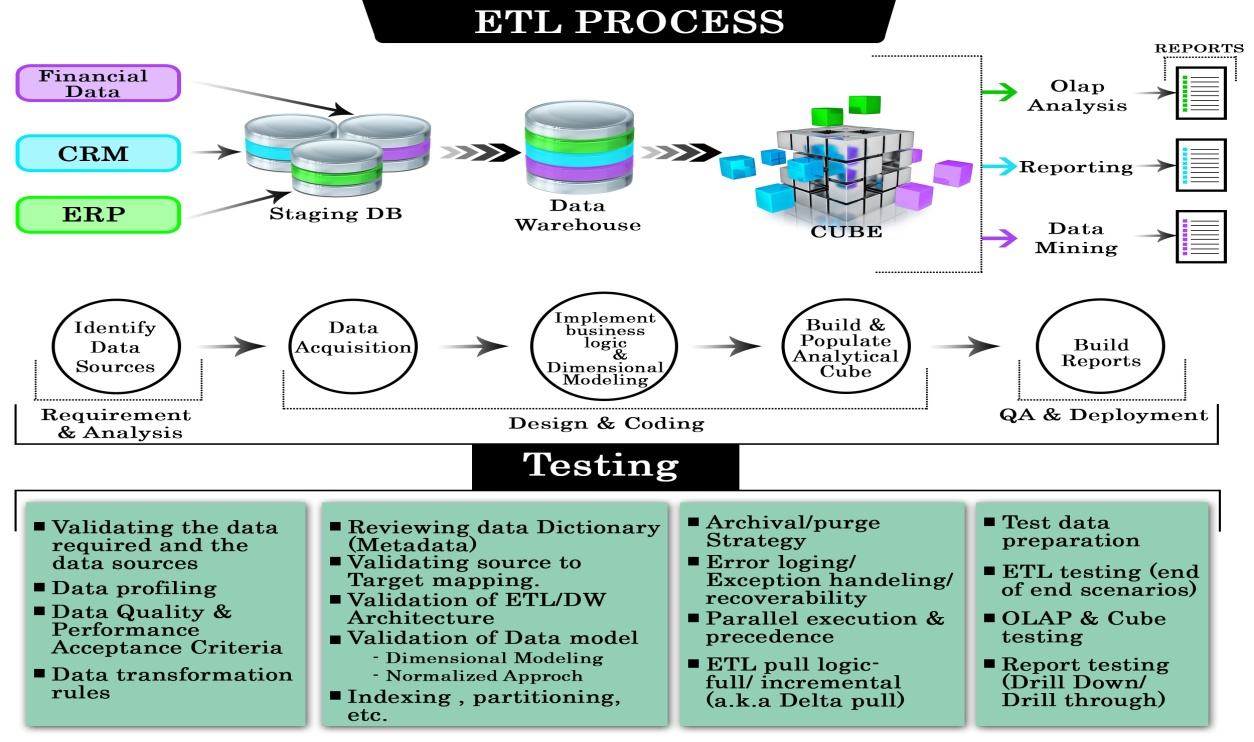

The following diagram gives you the ROAD MAP of the ETL process. Extract.

Extract relevant data. Transform. Transform data to DW (Data Warehouse) format. Build keys - A key is one or more data attributes that uniquely identify an entity. Various types of keys are primary key, alternate key, foreign key, composite key, surrogate key.

The datawarehouse owns these keys and never allows any other entity to assign them. Cleansing of data:After the data is extracted, it will move into the next phase, of cleaning and conforming of data. Cleaning does the omission in the data as well as identifying and fixing the errors.

ETL testing is a key aspect of data warehouse, data migration and data integration projects. It requires a data-centric testing approach.

Conforming means resolving the conflicts between those data’s that is incompatible, so that they can be used in an enterprise data warehouse. In addition to these, this system creates meta-data that is used to diagnose source system problems and improves data quality. Load. Load data into DW ( Data Warehouse).

Build aggregates - Creating an aggregate is summarizing and storing data which is available in fact table in order to improve the performance of end-user queries. What is ETL Testing? ETL testing is done to ensure that the data that has been loaded from a source to the destination after business transformation is accurate. It also involves the verification of data at various middle stages that are being used between source and destination. ETL stands for Extract-Transform-Load.

ETL Testing Process Similar to other Testing Process, ETL also go through different phases. The different phases of ETL testing process is as follows ETL testing is performed in five stages.

Identifying data sources and requirements. Data acquisition. Implement business logics and dimensional Modelling. Build and populate data. Build Reports Types of ETL Testing Types Of Testing Testing Process Production Validation Testing “Table balancing” or “production reconciliation” this type of ETL testing is done on data as it is being moved into production systems.

To support your business decision, the data in your production systems has to be in the correct order. Data Validation Option provides the ETL testing automation and management capabilities to ensure that production systems are not compromised by the data. Source to Target Testing (Validation Testing) Such type of testing is carried out to validate whether the data values transformed are the expected data values. Application Upgrades Such type of ETL testing can be automatically generated, saving substantial test development time. This type of testing checks whether the data extracted from an older application or repository are exactly same as the data in a repository or new application.

Metadata Testing Metadata testing includes testing of data type check, data length check and index/constraint check. Data Completeness Testing To verify that all the expected data is loaded in target from the source, data completeness testing is done.

Some of the tests that can be run are compare and validate counts, aggregates and actual data between the source and target for columns with simple transformation or no transformation. Data Accuracy Testing This testing is done to ensure that the data is accurately loaded and transformed as expected. Data Transformation Testing Testing data transformation is done as in many cases it cannot be achieved by writing one sourcequery and comparing the output with the target. Multiple SQL queries may need to be run for each row to verify the transformation rules. Data Quality Testing Data Quality Tests includes syntax and reference tests. In order to avoid any error due to date or order number during business process Data Quality testing is done.

Syntax Tests: It will report dirty data, based on invalid characters, character pattern, incorrect upper or lower case order etc. Reference Tests: It will check the data according to the data model. For example: Customer ID Data quality testing includes number check, date check, precision check, data check, null check etc. Incremental ETL testing This testing is done to check the data integrity of old and new data with the addition of new data. Incremental testing verifies that the inserts and updates are getting processed as expected during incremental ETL process. GUI/Navigation Testing This testing is done to check the navigation or GUI aspects of the front end reports. How to create ETL Test Case ETL testing is a concept which can be applied to different tools and databases in information management industry.

The objective of ETL testing is to assure that the data that has been loaded from a source to destination after business transformation is accurate. It also involves the verification of data at various middle stages that are being used between source and destination. While performing ETL testing, two documents that will always be used by an ETL tester are.

ETL mapping sheets:An ETL mapping sheets contain all the information of source and destination tables including each and every column and their look-up in reference tables. An ETL testers need to be comfortable with SQL queries as ETL testing may involve writing big queries with multiple joins to validate data at any stage of ETL.

ETL mapping sheets provide a significant help while writing queries for data verification. DB Schema of Source, Target: It should be kept handy to verify any detail in mapping sheets. ETL Test Scenarios and Test Cases. Test Scenario Test Cases Mapping doc validation Verify mapping doc whether corresponding ETL information is provided or not. Change log should maintain in every mapping doc. Validation.

Validate the source and target table structure against corresponding mapping doc. Source data type and target data type should be same. Length of data types in both source and target should be equal.

Verify that data field types and formats are specified. Source data type length should not less than the target data type length. Validate the name of columns in the table against mapping doc. Constraint Validation Ensure the constraints are defined for specific table as expected Data consistency issues. The data type and length for a particular attribute may vary in files or tables though the semantic definition is the same. Misuse of integrity constraints Completeness Issues. Ensure that all expected data is loaded into target table.

Compare record counts between source and target. Automation of ETL Testing The general methodology of ETL testing is to use SQL scripting or do “eyeballing” of data. These approaches to ETL testing are time-consuming, error-prone and seldom provide complete test coverage. To accelerate, improve coverage, reduce costs, improvedetection ration of ETL testing in production and development environments, automation is the need of the hour. One such tool is Informatica.

Most of the time many software testing guys are totally confused about Test Strategy and Test Plan Template. So I am writing this article for those who keen to learn about what is actual difference between Test Plan document & Test Strategy document. In this article I am concentrating on actual Sample Test Plan example and in next article I will share the What is Test Strategy document and why it is needed. Also I have added link to Download sample Test Plan in PDF format at the bottom of the article.

———————————————————–——————————————————— Sample Test Plan Test Plan Template Table of Contents: 1. INTRODUCTION 2. OBJECTIVE 3. SCOPE 3.1 Functions to be tested. 3.2 Functions not to be tested. 4. REFERENCES 5. TESTING PROCESS OVERVIEW 6. TEST STRATEGY 6.1 Testing Types Black box testing GUI Testing Integration Testing Functional Testing System Testing Performance Testing Stress Testing Security and Access control testing User acceptance testing Alpha testing 6.2 Tools 7.

TEST ENVIRONMENT 8. TEST SCHEDULE 9.

CONTROL PROCEDURE 10. ROLES AND RESPONSIBILITIES 11. DELIVERABLE 12. ENTRY CRITERIA 13. SUSPENSION CRITERIA 14.

RESUMPTION CRITERIA 15. EXIT CRITERIA 16. ACRONYMS ———————————————————–——————————————————— 1. INTRODUCTION has contracted with, India to design, development and testing the reports of their clients.

This document will address the different standards that will apply to the unit, integration and system testing of the specified application. The design, development and testing of these reports will be based on clients “Project Name” management project. Throughout the testing process we will be applying the test documentation specifications described in the IEEE Standard 829-1983 for Software Test Documentation. 2. OBJECTIVE Objective of Test plan is to define the various Testing strategies and Testing tools used for complete Testing life cycle of this project. 3. SCOPE The document mainly targets the GUI testing and validating data in report output as per Requirements Specifications provided by Client. 3.1 Functions to be tested.

Reports Output/Data 3. Report Setup/Locations 3.2 Functions not to be tested. Not other than mentioned above in section 3.1 4. REFERENCES. Guidelines provided by Client and their clients. Refer Guideline location: CompanynameProjectNameDesignGuidelines 5. TESTING PROCESS OVERVIEW 5.1 Test Process Test process followed by QA will be categorized in to 2 ways:. Process to be followed when sufficient time is available for QA.

Process to be followed when sufficient time is not available for QA A) Process to be followed when sufficient time is available for QA Understanding Requirements:. Requirement specifications will be sent by client. Understanding of requirements will be done by QA along with. Respective lead and developer and queries are raised if any. Raised queries will be sent by lead to client.

Response to queries will be sent by client. Preparing Test Cases: QA will be based on the requirement specifications. This will cover all scenarios for requirements. Preparing Test Matrix: QA will be preparing test matrix which maps test cases to respective requirement. This will ensure the coverage for requirements. Reviewing test cases and matrix:. Peer review will be conducted for test cases and test matrix by senior QA member in QA team.

In certain cases for e.g. Complex requirements, lead’s help will be taken for conducting review. Any comments or suggestions on test cases and test coverage will be provided by reviewer respective Author of Test Case and Test Matrix. Suggestions or improvements will be re-worked by author and will be send for approval.

Re-worked improvements will be reviewed and approved by reviewer Creating Test Data: Test data will be created by respective QA on client’s developments/test site based on scenarios and Test cases. Executing Test Cases:. Test cases will be executed by respective QA on client’s development/test site based on designed scenarios, test cases and Test data. Test result (Actual Result, Pass/Fail) will updated in test case document Defect Logging And Reporting: QA will be logging the defect/bugs in Bugzilla bug tracking tool found during execution of test cases and will assigned the Bug id generated by Bugzilla to respective test cases document. After this, QA will inform respective developer about the defect/bugs. Retesting And Regression Testing: Retesting for fixed bugs will be done by respective QA once it is resolved by respective developer and bug/defect status will be updated accordingly. In certain cases, regression testing will be done if required.

Deployment/Delivery:. Once all bugs/defect reported after complete testing is fixed and no other bugs are found, report will be deployed to client’s test site by developer.

Once round of testing will be done by QA on client’s test site if required. Report will be delivered along with sample output by email to respective lead and Report group. QA will be submitting the filled hard copy of delivery slip to respective developer. Once lead gets the hard copy of delivery slip filled by QA and developer, he will send the report delivery email to client.

B) Process to be followed when sufficient time is not available for QA Understanding requirement:. Requirement specification will be sent by client. Understanding of requirements will be done by QA along with respective lead and developer and queries are raised if any. Raised quires will be sent by lead to client. Response to queries will be sent by client Creating test data: Test data will be created by respective QA on client’s development/test site based on scenarios and test cases.

Executing test scenarios: QA will be doing adhoc testing based on requirements and test scenarios. Defect logging and reporting: QA will be logging the defects/bugs in bug tracking tool found during executing the test. After this, QA will inform respective developer about the defect/bugs. Retesting and regression testing: Retesting for fixed bugs will be done by respective QA once it is resolved by respective developer and bug/defect status will be updated accordingly.

In certain cases, regression testing will be done if required Deployment/delivery:. Once all bugs/defects reported after complete testing are fixed and no other bugs are found, report will be deployed to client’s test site by developer. One round of testing will be done by QA on client’s test site if required. Report will be delivered along with sample output by email to respective lead and report group. QA will be submitting the filled hard copy of delivery slip to respective developer.

Once lead gets the hard copy of delivery slip filled by QA and developer, he will send he report delivery email to Client. 5.2 Data creation for testing. QA will create test data on development site for scenarios based on client’s requirements specifications. 5.3 Bug life cycle: All the issues found while testing will be logged into Bugzilla bug tracker.

Bug life cycle for this project is as follows: Bug Life Cycle Image Credit: 6. TEST STRATERGY 6.1 Testing types It is some time called behavioral testing or Partition testing. This kind of testing focuses on the functional requirements of the software. It enables one to derive sets of input conditions that that will fully exercise all functional requirements for a program. GUI Testing: GUI testing will includes testing the UI part of report.

It covers users Report format, look and feel, error messages, spelling mistakes, GUI guideline violations. Integration Testing: Integration testing is systematic technique for constructing the program structure while conducting test to uncover errors associated with interacting. In Report, integration testing includes the testing Report from respective location(s). Functional testing is carried out in order to find out unexpected behavior of the report. The characteristic of functional testing are to provide correctness, reliability, testability and accuracy of the report output/data.: System testing of software is testing conducted on a complete, integrated system to evaluate the system’s compliance with its specified requirements. Performance Testing: Performance testing will be done by Client Stress Testing: Stress testing will be done by Client. Security and Access control testing.

Not Applicable as this is already done by Client.: The purpose behind user acceptance testing is to conform that system is developed according to the specified user requirements and is ready for operational use. Acceptance testing is carried out at two levels – Alpha and Beta Testing. User acceptance testing (UAT) will be done at the Client.

Alpha testing: The alpha test is conducted at the developer’s site by client. Tool Name Vender Version Microsoft SQL Server 2005 Microsoft 9.00.3042.00 Bugzilla Selenium Open Source Cisco VPN Client CISCO 7. TEST ENVIRONMENT Server Name URL Machine: QA-Admin-site QA-Admin-site/login.aspx Machine: QA-Reporting-site QA-Reporting-site/login.aspx Machine: QA-Database – 8. TEST SCHEDULE Planning Phase: High-level test planning activities, which include preliminary development of Master QA Plan (this document, QA schedule. At this Milestone, the high level planning should be completed. Some of the deliverable are: Project Plan, Program function specifications. Design Phase: Development and Test engineers participate actively in feature design by inspecting and reviewing the requirements and design documents.

As the design documents are completed, the test engineers are encouraged to start working on the Test Plan document and test design planning. Code Complete-Infrastructure: The Test Engineers should have completed or in the final stages of their preliminary Infrastructure Test Plan, test cases and other QA documents related to test execution for each feature or component such as test scenarios, expected results, data sets, test procedures, scripts and applicable testing tools. Code Complete-Function: The Test Engineers should have provided Code Complete Assessment Test to Development Engineer one week prior to Code Complete Review date. The Test Engineers should also have completed or in the final stages of their preliminary White Box Test Plan, test cases and other QA documents related to test execution for each feature or component such as test scenarios, expected results, data sets, test procedures, scripts and applicable testing tools. Feature Complete: All bugs verified and QA documentation is finalized.

The test Engineers should assess that Binary Tree features are ready for Beta regression and have started their preliminary Test Summary Reports. Regression Test: Complete regression test execution of complete system and update Test Summary Reports for regression. Beta Ready: 2 Weeks regression of Binary Tree features to Beta and preparation for Beta Shutdown.

Ship/Live: Any unfinished Testing documents should be complete. CONTROL PROCEDURE 9.1 Reviews: Reviews will be done on following documents and review report will be prepare for each work products. Test cases. RTM(Requirement Traceability Matrix) 9.2 Bug Review Meetings: Bug review meeting will be held for every test cycle conducted during the following phases:-. GUI Testing. Report Output/Data Testing In case of critical / show stoppers bugs. 9.3 Change Request: Change request for report will be handled using following process:.

Understanding the change request and its impact on exiting report functionality. If the change is major, test cases will be updated. If the change is minor, test cases will may not be updated. Retesting and regression testing will be done as per changed request 9.4 Defect Reporting: Bugs found during static and dynamic testing will be logged in Bugzilla bug tracking tool. ROLES AND RESPONSIBILITIES Role Responsibilities PM. Acts as a primary contact for development and QA team. Responsible for Project schedule and the overall success of the project.

QA. Understand requirements. Writing and executing Test cases. Preparing RTM.

Reviewing Test cases, RTM. Defect reporting and tracking. Retesting and regression testing. Bug Review meeting 11. DELIVERABLE Deliverable Responsibility Test Design DocumentTest plan document a) Unit white-box test design – covers white testing criteria, methods and test casesb) System test design – covers system test criteria, methods, and test cases, scripts.c) Unit black-box test design – covers black-box testing criteria, methods and test cases. Test report document a) System Test report – covers system test results, problems, summary and analysisb) Unit white-box test report – covers unit white box test results, problems, summary and analysisc) Unit black-box test report – covers unit black box test results, problems, summary and analysis 12. ENTRY CRITERIA.

The whole source code must be unit tested H/W and S/W should be in place. QA resources have completely understood the requirements. QA resources have sound knowledge of functionality in Reports. Reviewed test scenarios, test cases and RTM 13.

SUSPENSION CRITERIA. The build contains many serious defects which seriously or limit testing progress. Significant change in requirements suggested by client. Software/Hardware problems. Assigned resources are not available when needed by test team. RESUMPTION CRITERIA. Resumption will only occur when the problem(s) that caused the caused the suspension have been resolved 15.

EXIT CRITERIA. No defects over a period of time or less testing efforts. All the high priority/severity test cases has been executed.

Deliverables are ready. High severity/ priority bugs are fixed 16. RISK.

Delay in delivery of test items might require increased night shift scheduling to meet the delivery date. Understanding requirements.

Domain and project knowledge 17. ACRONYMS. GUI: Graphical User Interface. RTM: Requirement Traceability Matrix You can also here. If you really like the Test Plan Template then feel free to share to your friends who really need it. Also if you think I need to cover any point in this article or any query on this then add in the comments below & I will definitely try to answer your query.